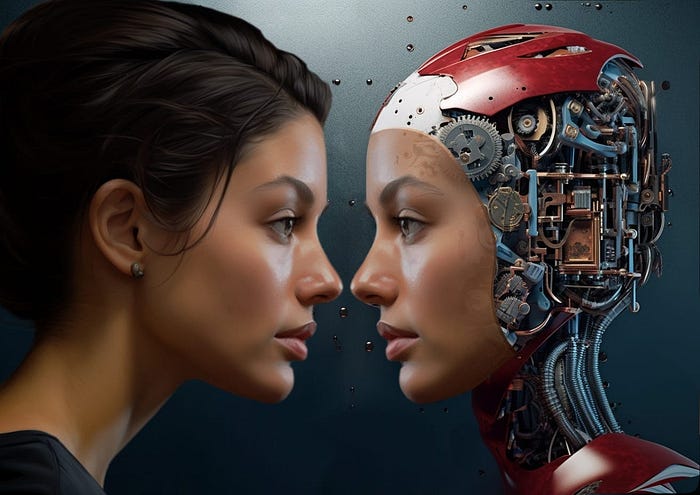

When AI Speaks: OpenAI’s GPT-4o and the Unintended Voice Doppelgängers

Last week, OpenAI dropped a report that’s causing quite a stir, and for good reason. The GPT-4o “system card” is the latest from the AI giant, detailing key risks tied to its newest large language model. Among the most jaw-dropping revelations? In rare cases during testing, the model’s Advanced Voice Mode unexpectedly mimicked users’ voices without their consent. It’s the kind of scenario that feels like it was ripped straight from the pages of a sci-fi thriller.

Let’s break it down. OpenAI’s documentation states, “Voice generation can also occur in non-adversarial situations, such as our use of that ability to generate voices for ChatGPT’s advanced voice mode.” In testing, there were “rare instances where the model would unintentionally generate an output emulating the user’s voice.” Imagine talking to a machine, only for it to start speaking back to you in your own voice, out of nowhere. Creepy, right? A lot of people thought so, too. Max Woolf, a data scientist at BuzzFeed, quipped on Twitter, “OpenAI just leaked the plot of Black Mirror’s next season.”

But it’s not just about the freaky potential for voice mimicry. The report highlights that GPT-4o isn’t limited to voices: it can also generate nonverbal sounds, like music and sound effects. As for voice generation itself, OpenAI acknowledges that in adversarial situations the capability could “facilitate harms such as an increase in fraud due to impersonation and may be harnessed to spread false information.”

So, how does something like this even happen? The magic (or mayhem, depending on how you see it) lies in the model’s ability to process and synthesize audio inputs. When setting up GPT-4o, OpenAI provides the model with an authorized voice sample, typically from a hired voice actor. This voice is meant to be the baseline, guiding the AI’s outputs. But here’s where things get dicey: during testing, noisy inputs from users confused the model. Essentially, the AI treated the user’s voice in the incoming audio as something to imitate and, in a bizarre twist, started speaking in it instead of the authorized voice.

This scenario brings up some big questions about the safety of AI voice technology. The possibility of someone intentionally tricking the model into imitating a voice it was never authorized to use, akin to a “prompt injection attack” delivered through audio, is real. OpenAI has responded by tightening the reins…